Decentralize or Die (Part 1)

Decentralize - if you should - or die. Thank you to Sal (from EV3), Kollan (from MetaDAO), Neil (from DAWN), and Andrew (from Jito Foundation) for thoughtful feedback and review.

Having spoken to many individuals and teams across Solana about restaking and node consensus networks (NCNs), I find it evident that Solana developers are extremely pragmatic. Teams often want to decentralize at some point, but it is not a priority at the moment. In other words, there tends to be a distance between building a valuable product and decentralizing it, and that distance varies depending on the product or service.

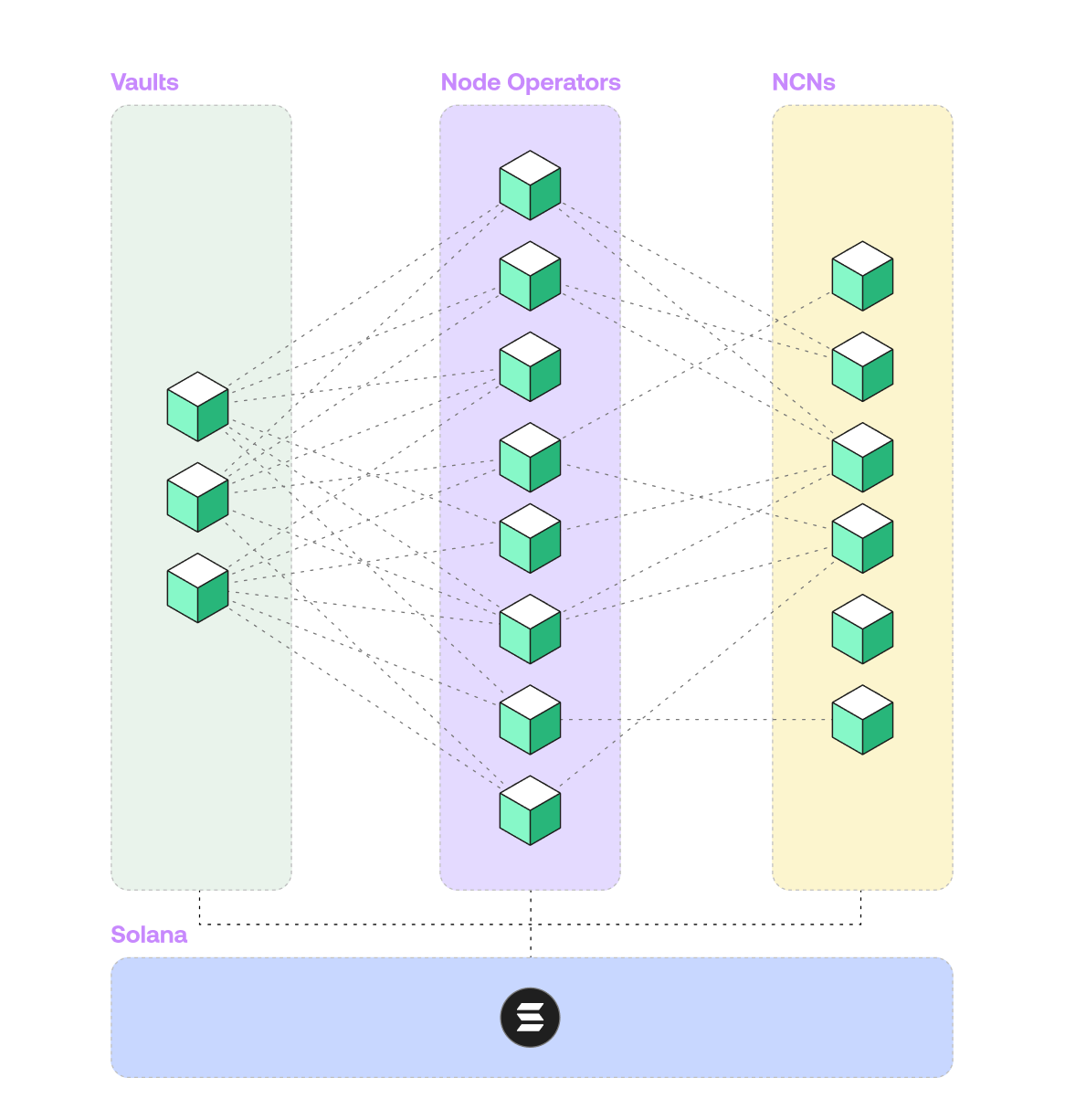

Decentralization is paramount in the context of MEV and building distributed financial systems, and increasingly important in the context of other networked services. We built the Jito (Re)Staking protocol because there are aspects of Jito Network that can benefit from incremental decentralization. It’s a framework for developers to use the Solana runtime for any arbitrary proof of stake system and accelerate decentralization. The expectation is that, as the ecosystem continues to mature, developers will eventually prioritize resiliency over product iteration speed and seek to build custom decentralized solutions that fit the needs of their protocol.

This article discusses some ideas and considerations for decentralizing various protocols on Solana. In a future article, I’ll discuss different ways of decentralizing services into protocols. I’ll also describe the Jito (Re)Staking protocol in more detail and how a few teams are using it to build decentralized protocols that enhance their services.

TLDR

Decentralization for the sake of decentralization is a poor reason to decentralize a protocol.

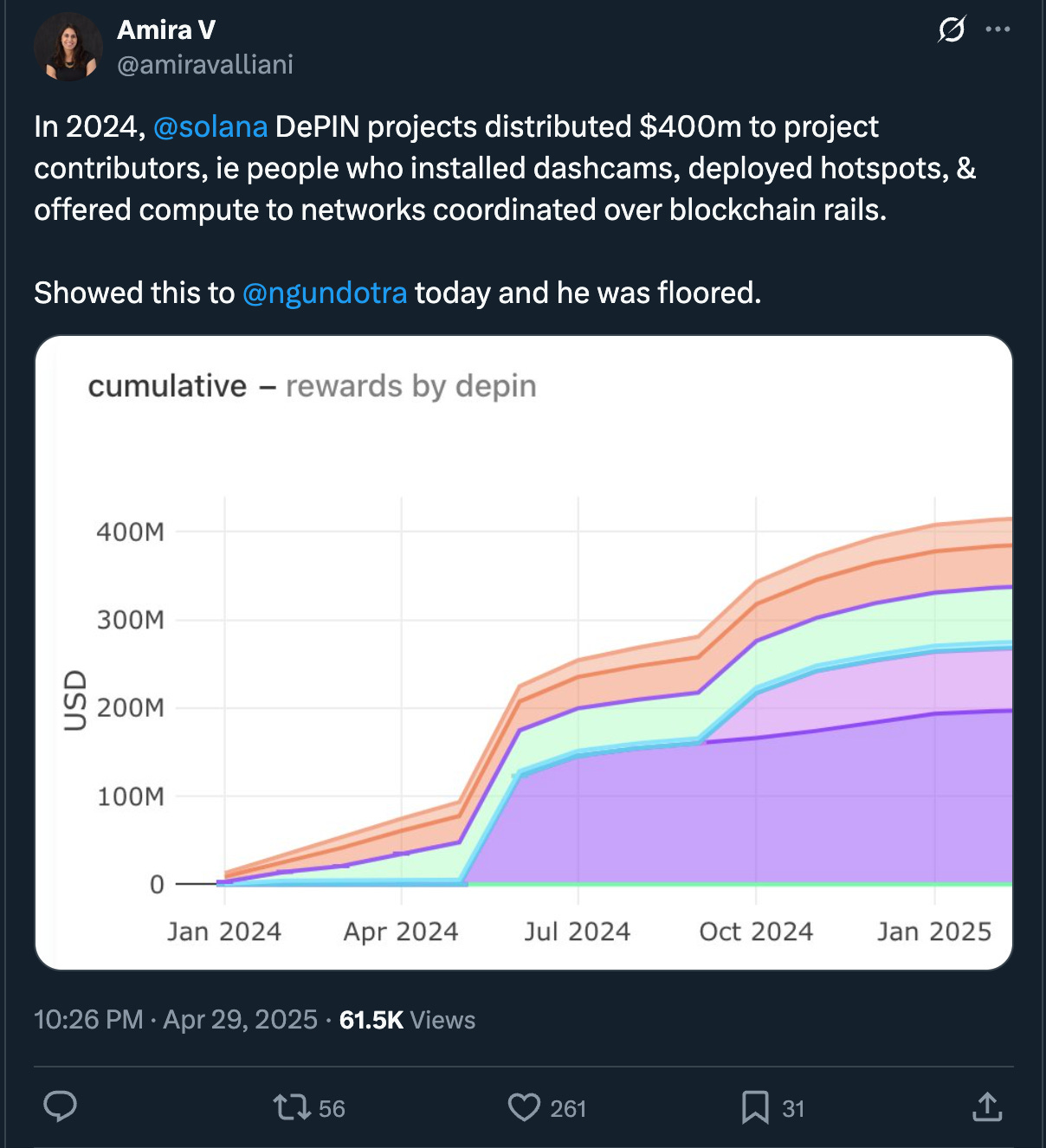

If a distributed service is cheaper than its centralized counterpart, then it can be decentralized, which means it can benefit from greater security (lower trust assumptions) and from using a token to bootstrap and maintain the network.

Verification mechanisms for DePINs or redundant services to secure much larger networks are great reasons to build a decentralized protocol.

DePINs can separate verification from execution and get better pricing for specific verifiable properties, such as location and bandwidth.

Redundant services can strengthen the security of larger networks.

Offering services on decentralized protocols will become increasingly competitive, and now’s the time to get ahead.

Decentralization is seldom valuable.

Decentralization is the key property that differentiates blockchains from legacy information-technologies. Among other things, it greatly lowers the barrier to access basic financial products and other networked services, reduces dependencies on any single actor, and increases the speed of disseminating (and pricing) information at global scale.

Decentralization as a feature is a new frontier for internet applications, but it is both a blessing and a curse. It creates additional (operational and development) costs and game-theoretic risks, and it introduces higher round-trip latency due to the nature of achieving consensus, which in turn gives rise to MEV. In fact, I’d argue that, more often than not, “decentralization” is unnecessary, and in crypto it’s been abused. In most cases, a network or service either does not need to be decentralized or decentralizes too much (or too early), which produces negative externalities and additional problems, such as precipitous secondary market price discovery and out-of-control, ineffective DAO spending.

For example, Ethereum’s ICO and low hardware requirements produced a wildly successful outcome. Today, Ethereum has the largest network effects in all of crypto, and it is commonly referred to as the most decentralized and secure generalized smart contract L1. However, it also suffers from a lack of unified vision, leadership, and productive development cycles – all of which seem to be shifting after years of fairly stagnant progress, especially relative to its competitors – in large part due to how decentralized the network is.

In a recent talk, when asked, “if you could do anything differently, what would it be?” Helium Foundation CEO Scott Sigel expressed a wish to have been less decentralized at the start because of the additional overhead costs and complexities and the lack of return associated with those additional inputs. To use Moxie’s words, “the ecosystem is moving,” and to compete in crypto (and tech more broadly) over a long time horizon, one could roughly depend on the following heuristic: the more decentralized a system is, the more inflexible and immobile it becomes. One should be careful about decentralizing their protocol and, if they decide to decentralize, be very intentional about why, about the design, and about its features. It is a credit to Solana teams that they understand this point while also continuing to sail toward further decentralization.

That said, given that it sits at over $200B FDV, I think Ethereum (and crypto more generally) proves the concept that decentralization is extremely valuable, and the past several years of experimentation in crypto have made it evident that more strategic thinking around decentralization is necessary. Furthermore, developers can greatly benefit from having the ability to build decentralized protocols from scratch.

Decentralization is valuable under certain circumstances.

I think the value of decentralization is attributable to three primary properties:

1. Broadly, there is a problem of trust in traditional contexts: distributing some function across a set of actors improves the trustlessness and security (and in some cases the performance) of a protocol by diversifying operational risks, reduces the necessity to trust any single actor, and imbues a valuation premium.

2. There is a structural arbitrage between the price of offering and scaling particular services in a decentralized manner and the price traditional centralized incumbents set to access or participate in those services.

3. Tokens are a unique crypto-native incentive mechanism to bootstrap and scale decentralized networks.

Whether or not a protocol can benefit from (1) is a measure of the amount of (prospective) value being processed. More concretely, it is much more valuable to decentralize a protocol that processes $100mm than a protocol that processes $1mm (or potentially nothing).

(2) is a fairly basic equation with more or less fixed inputs and outputs; e.g., you can technically offer wireless internet at a much lower cost than incumbents.

And (3) (the tokenomics) is much more complicated. There is no great standard for it, and in a market where the perception of a project is – for better or worse – tethered to the performance of its underlying token, tokenomics is arguably more important (or at least the final boss facing decentralized systems).

In fact, I’d wager that tokenomics are more descriptive of bad outcomes than good outcomes – meaning, the costs and risks of having bad tokenomics are way higher than the benefit of having great tokenomics.

While complicated, I think decentralizing a service can be translated into three main questions:

1. Should you decentralize a service? Assuming answering yes entails increased security for tangible value creation, the next questions are:

2. How should you decentralize it? Usually a consensus model, such as Proof of Stake or Proof of Authority, and a token as an incentive mechanism and therefore tokenomics are involved.

3. How much should it cost? It depends on the product and service.

To set the stage for addressing questions 2 and 3 in a future article, which will also include a more in-depth technical discussion of the restaking protocol, the next section discusses question 1. Assuming both that decentralization has intrinsic value AND that there is something valuable enough to secure is a bad assumption.

Why turn a service into a decentralized protocol?

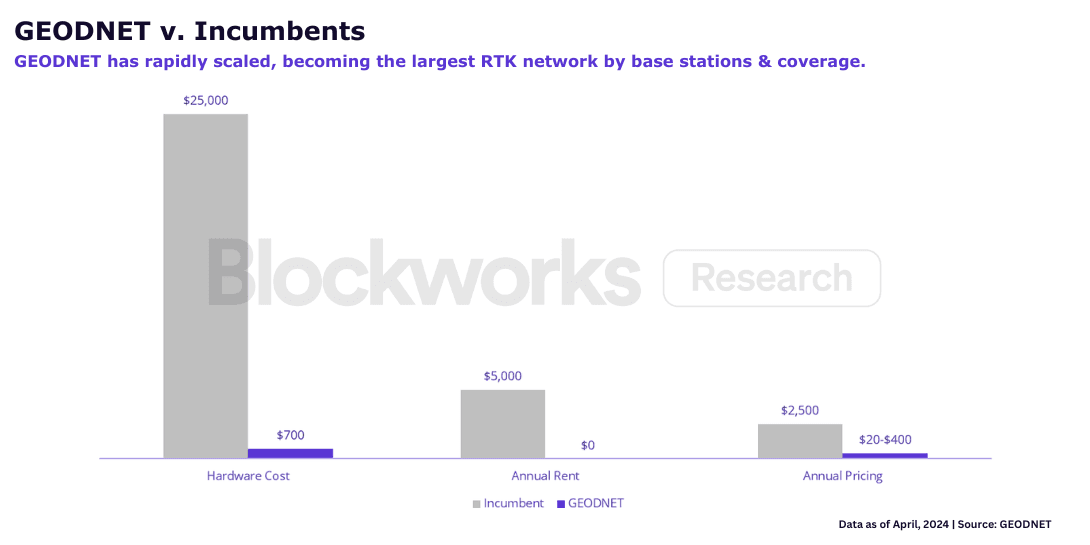

It makes sense to decentralize a service into a protocol if the technology enables an arbitrage. DePINs are a great example of this. Time and time again, DePINs are gaining real-world traction because decentralizing particular services inverts the cost structure of traditionally capital-intensive networks. For example, it costs only $700 to deploy a Real Time Kinematics (RTK) station on Geodnet vs $12k-25k with incumbents, and therefore the cost for accessing these services is an order of magnitude less expensive. Additionally, in DAWN, user-owned wireless allows average gigabit Internet prices to be in the $10-30 range vs the $100+ range. But it does not stop there.

If anyone can participate in the network and run a device, how does the network verify that devices are offering high quality work? How does it enforce good work (and penalize bad work)? How much is the network spending on this verification?

DePINs

DePINs are an interesting category for restaking because they uniquely benefit from each value proposition of decentralization: pricing, trust, and tokenomics. In particular, each DePIN is supposed to offer a service at a lower cost, requires some verification mechanism to prove that in-network devices are providing high quality services, and utilizes tokens and tokenomics to bootstrap the network and maintain its quality.

Verification is achieved by running some kind of proof of [insert here] protocol, such as proof of location or proof of bandwidth. Normally, DePINs rely on a p2p network to run these verifications, meaning, devices in the network are responsible for receiving work, completing work, and verifying each other’s work. The results of these verification processes inform network emissions and policies.

For example, for Geodnet, a proof of location protocol would inform increasing (or decreasing) network incentives to specific regions based on participation and demand. If there is more demand for RTK services than supply (or vice versa) in a region, Geodnet could distribute more emissions in that region to incentivize more participation. Or, for Kuzco (a distributed GPU marketplace), a proof of inference protocol would verify generations are run correctly and mitigate false generations, and therefore reward honest performant workers and penalize fake workers.
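To make the Geodnet example concrete, here is a minimal sketch of demand-weighted emissions. It is not Geodnet's actual mechanism: the `Region` type, the `allocate_emissions` function, the demand and supply figures, and the proportional weighting rule are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Region:
    name: str
    demand: float  # hypothetical: verified service requests in the region
    supply: float  # hypothetical: verified active stations in the region


def allocate_emissions(regions, epoch_emissions):
    """Split a fixed emission budget in proportion to each region's demand/supply ratio."""
    # Under-supplied regions (high demand per station) receive a larger weight.
    weights = {r.name: r.demand / max(r.supply, 1.0) for r in regions}
    total = sum(weights.values())
    if total == 0:
        # No demand anywhere: fall back to an even split.
        return {r.name: epoch_emissions / len(regions) for r in regions}
    return {name: epoch_emissions * w / total for name, w in weights.items()}


regions = [
    Region("region-a", demand=900, supply=100),  # 9 requests per station
    Region("region-b", demand=100, supply=100),  # 1 request per station
]
allocation = allocate_emissions(regions, epoch_emissions=1000.0)
print(allocation)
```

In this toy setup the under-supplied region receives most of the epoch's budget, which is the kind of signal a proof of location protocol would need to feed into emissions policy.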

However, according to a Solana DePIN report, even though on-chain revenue is sitting at $6M, over $400M was emitted to DePIN participants. Distributing emissions to everyone for doing the same work is questionable and appears to be extremely cost-ineffective. This model also does not scale as well as dividing tasks across specialists. In order to transition to a more scalable verification network for Helium Mobile, Helium introduced a higher-level validator set of oracles (run by Nova Labs) to verify proof of coverage.
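As a rough back-of-the-envelope check on those figures, the snippet below computes emissions per dollar of on-chain revenue. The two inputs are the numbers quoted above; since emissions are stated as "over $400M", the result is a lower bound. The variable names are illustrative.

```python
# Figures quoted in the text; emissions are "over $400M", so this is a lower bound.
onchain_revenue_usd = 6_000_000
emissions_usd = 400_000_000

emissions_per_revenue_dollar = emissions_usd / onchain_revenue_usd
print(f"At least ${emissions_per_revenue_dollar:.1f} emitted per $1 of on-chain revenue")
```

In other words, the networks emitted at least roughly $67 in tokens for every dollar of on-chain revenue.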

Verification Protocols for DePINs

Rather than running these verification mechanisms in a p2p fashion, DePINs could benefit from separating execution (providing RTK services, AI inferencing, or wireless mobile) and verification into two distinct pipelines. In the context of L1 blockchain architectures, this separation of verification (consensus) and execution is called asynchronous execution. By creating distinct stages for verification and execution, blockchains can maximally utilize computer resources to increase bandwidth and reduce latency. Similarly, by outsourcing the verification process to a different set of node operators, DePINs can maximally utilize network resources specifically dedicated to the service they’re providing, reduce the total cost of emissions paid to the network, and mitigate things like spam and Sybil attacks. It allows networks to have more precision and observability on the efficiency, cost, and upgradeability of each task.

In addition, today the standard for DePINs is to stack multiple verification protocols, e.g. DAWN is expected to run proof of location, proof of bandwidth, and proof of frequency to verify devices are performing properly and scale the network. It is possible that, rather than having to deploy a unique network-specific instance of each verification protocol (in which everyone in the network must participate), DAWN could tap into an external proof of location protocol, for example. In other words, DAWN could benefit from a DePIN version of “asynchronous execution,” i.e. separating verification from executing services. If a set of specialized nodes were running proof of location and another set of specialized nodes were running proof of bandwidth, then DAWN could outsource verification for both properties, get market pricing for each of them, and therefore optimize the network’s services and costs around them. It could improve performance monitoring and scaling and reduce the total cost of emissions.

In particular, by separating verification from executing services, DePINs can leverage more sophisticated (re)staked validator sets to (1) validate the topology of specific properties (such as location and bandwidth) and (2) vote on the state of all resource allocation for the underlying network.
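One way to picture a separate verifier set voting on a property is a stake-weighted tally. The sketch below is illustrative only and does not describe the Jito (Re)Staking protocol or any DePIN's real design: the `tally` function, the operator names, the stake amounts, and the two-thirds threshold are all assumptions.

```python
from fractions import Fraction


def tally(attestations, stakes, threshold=Fraction(2, 3)):
    """Return True if the stake attesting 'valid' meets the threshold share of total stake.

    attestations: operator -> bool (did the operator attest that the claim is valid?)
    stakes:       operator -> integer stake; operators that did not attest count against.
    """
    total = sum(stakes.values())
    if total == 0:
        return False
    approving = sum(
        stakes[op] for op, ok in attestations.items() if ok and op in stakes
    )
    # Exact rational comparison avoids floating-point edge cases at the threshold.
    return Fraction(approving, total) >= threshold


# Hypothetical verifier set for a proof of location claim made by one device.
stakes = {"op1": 50, "op2": 30, "op3": 20}

accepted = tally({"op1": True, "op2": True, "op3": False}, stakes)  # 80 of 100 stake
rejected = tally({"op1": True}, stakes)                             # 50 of 100 stake
print(accepted, rejected)
```

The device providing the service never appears in the tally; it only submits a claim, and a specialized, staked operator set decides whether that claim counts toward emissions.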

Redundant Services

Another less obvious reason to decentralize a service is that the incremental cost of paying for redundancy (i.e. paying others to run the service) is relatively low compared to the amount of value it secures. Effectively, networks can pay a small cost for stronger security and performance, i.e. censorship resistance, trustlessness, uptime, etc.

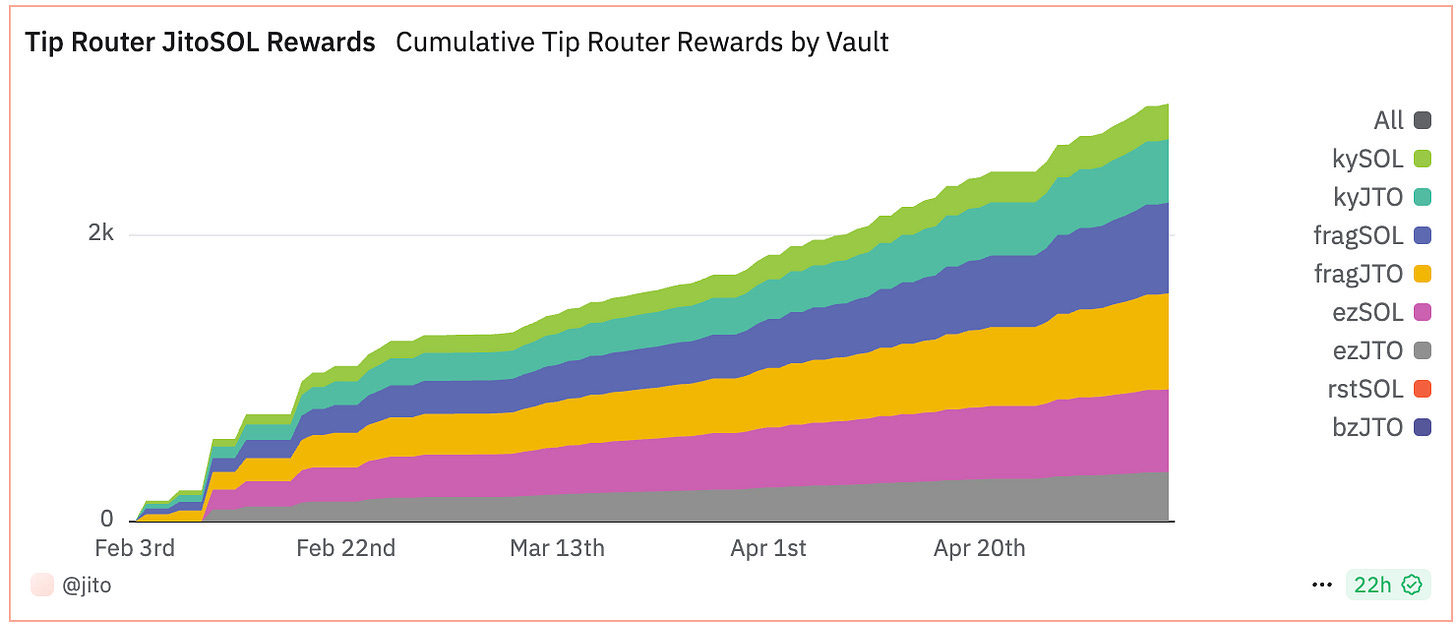

E.g. TipRouter

For example, TipRouter decentralizes the distribution of over a billion dollars (annualized) of MEV tips across the Solana ecosystem. Before TipRouter, Solana validators delegated this task to Jito Labs; while sufficient, it created a potential single point of failure and presented additional operational overhead and “trust me bro” assumptions for Jito Labs.

Decentralizing this task to a set of Solana full node operators strengthens the security of this large value distribution and greatly reduces trust assumptions. Skeptics might challenge the notion of paying external operators to participate in consensus for a task that is otherwise cheaper (and less redundant). The reasons are: there is value in depending on a network of nodes to facilitate this ongoing transfer of value vs a single node, there is value in standardizing this feature of Solana, and there is value in installing more legitimate utility for JitoSOL and JTO i.e. installing stronger tokenomics.

To date, TipRouter has paid roughly $500k to its set of stakers and 13 node operators contributing to consensus on distributing approximately $167M in MEV tips. And JIP-16 approved an upgrade that will enable any validator to use it to distribute priority fees. As a result, TipRouter greatly improves the security and resiliency of MEV tip distribution at a low cost and will soon have the ability to do so for priority fee distribution on Solana.
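To put "low cost" in numbers, the short calculation below compares what TipRouter has paid out with the value it helped distribute, using the approximate figures quoted above. The variable names are illustrative, and the orders-of-magnitude figure is only a rough gauge.

```python
import math

# Approximate figures quoted in the text.
paid_to_stakers_and_operators_usd = 500_000
mev_tips_distributed_usd = 167_000_000

cost_ratio = paid_to_stakers_and_operators_usd / mev_tips_distributed_usd
orders_of_magnitude = math.log10(
    mev_tips_distributed_usd / paid_to_stakers_and_operators_usd
)

print(f"Cost of redundancy: {cost_ratio:.2%} of value distributed")
print(f"Value secured exceeds cost by ~{orders_of_magnitude:.1f} orders of magnitude")
```

On these figures, the redundancy costs roughly 0.3% of the value distributed, about two and a half orders of magnitude less than the value it secures.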

E.g. “Cronchain” (aka make Clockwork great again)

Another example of a task that can be decentralized is on-chain cranking, i.e. an on-chain cronjob network, similar to TukTuk or Clockwork (which was sunset). Today, Jito Network operates a permissionless cranker to update the state of its products, including the vault program in the staking protocol. While this technically suffices, and paying for an external redundant service to execute this task might seem unnecessary, outsourcing this work to a set of distributed workers would strengthen the resiliency of the on-chain operation. This is an example of a service that does not make sense until a critical mass or threshold of economic value has been reached; a redundant service at a small additional cost is worth it if the cost is orders of magnitude less than the value of the underlying network. Perhaps Solana has reached this economic threshold to make Clockwork great again.

Final thoughts

All in all, decentralization for the sake of decentralization is a poor reason to decentralize a protocol. In most cases, it is a strategic benefit to start more centralized, focus on product, and incrementally decentralize. This is the mentality of most teams on Solana, which has generally been very bullish for the ecosystem.

However, I’d argue that the grander underlying trend of decentralization is your friend. If you prioritize product and reach a point where you are printing money, the next step is prioritizing decentralization, if the value is there, because as the demand for the product increases, so will the need for and value of hardening its resiliency. Otherwise, you risk eventually getting beaten by a competitor that does. Decentralize or die. In fact, as I write this, Beaverbuilder, one of Ethereum’s largest centralized block builders, made an announcement about decentralizing its block builder.

For many projects, it is a strategic medium- and long-term benefit to begin thinking about decentralization. Recent years, in and outside of crypto, have increased the demand for decentralized products and services, and Jito (Re)Staking is a framework to decentralize [insert here] protocol, directly on Solana.

If you value decentralization, or you are entering a phase in which decentralization adds a tangible benefit to your network and users, please get in touch. We are white gloving high impact NCN development.